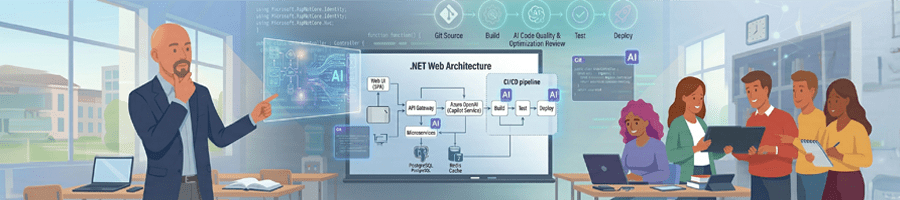

Course: CSCD 379 — .NET Web Development (300-level), Eastern Washington University, Winter 2026

Instructor: Grant Erickson (IntelliTect), with Meg Gravatt and Benjamin Michaelis

Approach: Full integration of AI tools across requirements, architecture, implementation, testing, and DevOps

Total Responses: 8

Overall Likert Average: 4.375 / 5.0

Series posts: If AI Writes the Code, What Should We Teach?, A Powerful Hope for the Future, Software Engineering Fundamentals Matter More Than Ever, and AI in Software Education: The Final Projects Are In

Per-Question Summary

Scale: 1 = Strongly Disagree / Very Ineffective, 5 = Strongly Agree / Very Effective

Q1 — AI Improved App Quality (Mean: 4.75)

Students overwhelmingly felt AI helped them produce higher-quality full-stack web applications (front-end, back-end, database, Azure deployment) than they could have without it. Six of eight students rated this a 5; the other two (Derek Stone and Nathan Cruz) gave a 4, which matches the 4.75 mean. The dominant theme was speed and leverage: students could iterate faster and polish more. No significant negative outliers.

Q2 — AI Freed Focus for Creativity (Mean: 4.625)

AI reduced the burden of repetitive coding, enabling students to focus on creative, innovative features. Five students gave a 5, three gave a 4. Multiple students mentioned rapid UI prototyping and the ability to quickly test user flows. No one felt AI hindered creativity.

Q3 — Worry About Over-Reliance on AI (Mean: 3.625)

This was the most polarized question. Two students (Nathan Cruz and Emma) rated their worry at 5 — the highest concern. Alex Reynolds rated it a 2 (least worried). The remaining students fell at 3–4. The tension: students acknowledge they may not code “from scratch” in the future, but some worry about foundational skill atrophy if they never exercise it.

Interesting outlier: Nathan explicitly described drifting toward “vibe coding” under deadline pressure, where the AI made architecture decisions instead of him. This was the most candid expression of the over-reliance concern.

Q4 — Enough Upfront Architecture Guidance (Mean: 4.50)

Most students felt they received sufficient architectural framing to prevent sloppy AI output. Nathan Cruz was the notable outlier at 3, feeling he needed more guardrails — consistent with his vibe-coding concern. Jordan Hayes also noted that early projects suffered from a lack of best-practice scaffolding, leading to “large page files with all the code together.”

Q5 — Requirements Felt More Important (Mean: 4.375)

Students noticed a clear shift: with AI handling code generation, the emphasis moved to nailing scope and requirements. Five students rated this a 5. Nathan Cruz was the low outlier at 3, which aligns with his sense of disconnection when AI drove too many decisions.

Q6 — Testing Was Essential (Mean: 4.00)

This question had the widest spread: Jordan Hayes and Emma rated it 5 (testing was crucial), while Tyler Brooks and Nathan Cruz rated it 2 (felt like extra work). The divide maps to workflow style — students who integrated testing early found it invaluable; those who iterated rapidly found tests trailing behind fast-moving implementations. Marcus Chen offered a nuanced insight: tests were sometimes written “with the intention of passing” rather than validating requirements.

Q7 — Final Project Felt Original (Mean: 4.375)

Despite heavy AI usage, most students felt their final projects reflected their own engineering decisions. Five students rated this a 5. Derek Stone and Nathan Cruz rated it 3 — Derek because he felt the engineering choices were less visible at the code level, and Nathan because deadline-driven vibe coding reduced his sense of ownership.

Q8 — AI Improved .NET Understanding (Mean: 3.75)

This was the second-lowest mean. While some students (Jordan Hayes, Emma, Marcus Chen at 5) felt AI deepened their understanding, others (Nathan Cruz at 2, Lily Park and Tyler Brooks at 3) leaned on AI for boilerplate without internalizing fundamentals. This question highlights the core pedagogical tension: AI can accelerate learning or bypass it, depending on the student’s intentionality.

Q9 — Prepared for AI-Using Workplace (Mean: 4.75)

Tied with Q1 and Q13 for the second-highest mean, behind only Q12. Students strongly believe the course maps to modern professional practice where AI is ubiquitous. Given the 4.75 mean over eight responses and Nathan’s 4, the ratings work out to six 5s and two 4s, so every student agreed.

Q10 — DevOps + AI Smoothed Deployment (Mean: 4.125)

Azure CI/CD with AI was a net positive, but friction surfaced. Jordan Hayes and Marcus Chen rated it 5; Nathan Cruz rated it 2 and Tyler Brooks rated it 3. Tyler specifically described AI’s Azure guidance as “not very helpful.” Emma described “chasing .NET errors in a loop” when AI got confused by Azure deployment steps.

Q11 — Confident Identifying Architectural Issues (Mean: 4.125)

Students generally felt capable of spotting and repairing structural issues in full-stack apps. Jordan Hayes, Alex, and Derek rated 5. Lily Park and Nathan Cruz rated 3 — the students who felt less connected to the architecture overall.

Q12 — Confident to Build/Deploy with AI at Work (Mean: 4.875)

The highest-rated question. Every single student rated this 4 or 5. Students left the course confident they could deliver a similar .NET full-stack app in a professional setting using AI tools. This is a powerful endorsement of the course’s practical value.

Q13 — Would Recommend the Course (Mean: 4.75)

Strong advocacy. Six students gave a 5; two gave a 4 (Alex and Derek). No student would discourage others from taking the AI-integrated format.

Q14 — Feel More Prepared for a CS Career (Mean: 4.625)

Students felt meaningfully more career-ready after this approach. Five students gave a 5; three gave a 4. The course’s industry orientation, creative project freedom, and real deployment experience all contributed.

Per-Question Means Table

| # | Question | Mean |
|---|---|---|
| Q1 | AI improved app quality | 4.750 |
| Q2 | AI freed focus for creativity | 4.625 |
| Q3 | Worry about over-reliance on AI | 3.625 |
| Q4 | Enough upfront architecture guidance | 4.500 |
| Q5 | Requirements felt more important | 4.375 |
| Q6 | Testing was essential | 4.000 |
| Q7 | Final project felt original | 4.375 |
| Q8 | AI improved .NET understanding | 3.750 |
| Q9 | Prepared for AI-using workplace | 4.750 |
| Q10 | DevOps + AI smoothed deployment | 4.125 |
| Q11 | Confident identifying architectural issues | 4.125 |
| Q12 | Confident to build/deploy with AI at work | 4.875 |
| Q13 | Would recommend the course | 4.750 |
| Q14 | Feel more prepared for CS career | 4.625 |
| | Overall Average | 4.375 |
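For readers who want to check the arithmetic, here is a minimal sketch of how the numbers in the table relate. It assumes each question received all 8 responses, so the overall average is simply the unweighted mean of the 14 per-question means. The function names are illustrative, not part of any survey tooling used in the course.

```python
# Per-question means copied from the table above (assumed 8 responses each).
QUESTION_MEANS = {
    "Q1": 4.750, "Q2": 4.625, "Q3": 3.625, "Q4": 4.500,
    "Q5": 4.375, "Q6": 4.000, "Q7": 4.375, "Q8": 3.750,
    "Q9": 4.750, "Q10": 4.125, "Q11": 4.125, "Q12": 4.875,
    "Q13": 4.750, "Q14": 4.625,
}


def mean_from_counts(counts: dict[int, int]) -> float:
    """Likert mean from a {rating: number_of_students} tally."""
    total_responses = sum(counts.values())
    total_points = sum(rating * n for rating, n in counts.items())
    return total_points / total_responses


def overall_average(means: dict[str, float]) -> float:
    """Unweighted mean of the per-question means."""
    return sum(means.values()) / len(means)


if __name__ == "__main__":
    print(overall_average(QUESTION_MEANS))   # 4.375
    print(mean_from_counts({5: 6, 4: 2}))    # Q13 tally (six 5s, two 4s): 4.75
    print(mean_from_counts({5: 5, 4: 3}))    # Q2 tally (five 5s, three 4s): 4.625
```

With only eight respondents, a single rating moves a question's mean by 0.125, which is worth keeping in mind when comparing questions that differ by one step.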

Individual Summaries by Student

Alex Reynolds

Experience: ~3 years of coding | AI in other classes: Yes | One word: “Parasitic”

Alex was one of the most enthusiastic respondents. He was staggered by the speed at which he could build complex components and ship applications. He rated AI’s impact on quality at 5/5 and was the least worried about over-reliance (2/5), suggesting strong confidence in his foundational skills. His biggest challenge was keeping AI up to date with project context across files and changes. He discovered a notable hallucination incident — AI created a fake version of his website inside a login page, which he caught through visual testing. He far preferred this course to the prior CSCD 378, calling it “freer” and more creatively enabling. His one-word description, “Parasitic,” is an interesting choice — acknowledging AI’s ability to embed deeply into workflow, for better or worse.

Tyler Brooks

Experience: 4 years of coding | AI in other classes: Yes | One word: “Empowering”

Tyler valued AI most for rapid UI creation, spinning up interfaces to test user flow and iterate quickly. His challenges were domain-specific: AI’s Azure help was weak, and as codebases grew, AI introduced code duplication and poor file organization. He had to actively guide AI on package structure. Testing felt like extra work during rapid early development but became more valuable as features stabilized. He appreciated the course’s emphasis on design and process over niche code topics, and the industry-like expectations (“does it work?”). He requested more time for deeper projects and explicit guidance on when AI is beneficial vs. when it falls short.

Jordan Hayes

Experience: 4 years of coding | AI in other classes: Yes | One word: “Horse”

Jordan gave the highest ratings across the board (all 5s except Q3=3 and Q4=4). She valued AI primarily for development speed, which was critical given a heavy course load. Her biggest challenge was practical: running out of tokens in VS Code, forcing a copy/paste workflow to ChatGPT. She practiced a plan-then-generate prompting strategy, spending several rounds clarifying with AI before code generation. Testing was embedded in her workflow since AI generated tests alongside features. She strongly advocated for students starting in their own repos from the outset for better portfolio presentation. She also flagged that early projects suffered from a lack of best-practice structuring guidance, leading to messy monolithic files. Her one-word description, “Horse,” is charmingly unique and perhaps captures the workhorse nature of AI assistance.

Marcus Chen

Experience: 3 years of coding | AI in other classes: Yes | One word: “Modern”

Marcus focused on AI’s ability to reduce repetitive code and enable focus on bigger-picture problems. He offered one of the sharpest critiques: tests were sometimes written “with the intention of passing” rather than validating requirements — a subtle but important distinction for AI-generated test quality. He enjoyed the course’s emphasis on scope and architecture and appreciated having an industry instructor with real-world knowledge. His primary suggestion was around cost transparency — he paid for GitHub Pro, which significantly improved his experience, but not all students could afford it. He recommended standardizing the AI tool and providing a brief tutorial at the start.

Lily Park

Experience: ~3 years of coding | AI in other classes: Yes | One word: “Productive”

Lily valued AI for boilerplate acceleration and quick .NET examples, plus faster debugging. Her biggest challenge was ensuring AI suggestions matched project requirements and architecture: the generated code often worked but didn’t fit the existing structure. She actively guided AI away from quick fixes toward more maintainable solutions. Testing made her slow down and verify rather than blindly accepting AI output. She felt the class mirrored industry problem-solving, with less “write from scratch” and more design and testing. She suggested more guidance on prompting and on critically reviewing AI-generated code. She noted that she personally learns better from lectures than from live demos, and that the demo-driven format made it harder to review material later.

Nathan Cruz

Experience: 3 years of coding | AI in other classes: No | One word: “Dominant”

Nathan was the most self-critical and introspective respondent. He valued AI for time savings and gap-filling, but candidly admitted that deadline pressure pushed him toward “vibe coding” — letting AI steer architecture decisions, which left him feeling less ownership of the software. He was the only student to rate architectural guidance at 3/5 and requirements emphasis at 3/5. He was also concerned that AI-written tests might not verify real requirements. His ratings were consistently the lowest in the cohort, not out of dissatisfaction with the course, but out of honest self-assessment about his own AI dependency. He strongly advocated for more prompting instruction and prompt-first assignments — having students write structured prompts (“Build with this structure and this flow”) rather than simple commands (“build me Wordle”). This is arguably the most actionable student feedback in the entire survey.

Derek Stone

Experience: 8+ years of coding (on and off) | AI in other classes: No | One word: “Glass-ceiling-shattering!”

Derek brought the most experience to the class and it showed in his nuanced perspective. He valued AI for removing boilerplate time so he could apply his “smarter brain” to harder design questions. His primary concern was that the biggest risk with AI is not doing enough thoughtful design or not reviewing output — if you let AI answer design questions, “that can lead to bad results.” He advocated a plan-first approach: plan with AI, review the plan, then implement. Testing was sometimes necessary, sometimes felt like it held progress back — “that’s where you might get into trouble” with important applications. He felt the class was more design-focused and practical than traditional university courses. He suggested more pre-planned assignment structure while maintaining space for creative exploration. His one-word description captures his optimism perfectly.

Emma

Experience: 4 years of coding | AI in other classes: Yes | One word: “Very Good”

Emma was concise but insightful. She valued AI for speeding up feature implementation. Her biggest challenge was chasing .NET errors in loops and Azure deployment confusion — AI would get lost in circular debugging. Her standout moment was rejecting AI’s suggestion to build Gemini prompting in the Nuxt front-end, instead maintaining clean separation of duties with a C# service in the back-end. This kept her app “cleaner and easier to manage.” She was already comfortable with testing; AI made her “write more tests more betterer.” She felt less constrained creatively than in other classes and was highly motivated. She valued having an industry instructor who understood where the industry “currently stands” and wasn’t telling students “they won’t ever get a job if they can’t regurgitate all of the rules for deletion in a red-black tree.” Her one change: warn about bad prompting pitfalls.
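The separation of duties Emma describes can be sketched roughly as follows. This is not her code: her service was written in C#, and Python is used here only to keep the illustration short. `HintService`, `handle_hint_request`, the prompt template, and the `ModelCall` stand-in are all hypothetical. The point is only that the prompt text and the model client live in the back-end, while the front-end sends plain data to an endpoint.

```python
from typing import Callable

# Hypothetical stand-in for a server-side LLM client call: prompt in, text out.
ModelCall = Callable[[str], str]


class HintService:
    """Back-end service that owns the prompt template and the model client."""

    PROMPT_TEMPLATE = "Give a one-sentence hint for the word '{word}' without revealing it."

    def __init__(self, call_model: ModelCall) -> None:
        # Credentials and provider details stay behind this callable, server-side.
        self._call_model = call_model

    def get_hint(self, word: str) -> str:
        prompt = self.PROMPT_TEMPLATE.format(word=word.strip().lower())
        return self._call_model(prompt).strip()


def handle_hint_request(service: HintService, payload: dict) -> dict:
    """Thin endpoint handler: the front-end sends data, never prompt text."""
    word = payload.get("word", "")
    if not word.isalpha():
        return {"status": 400, "error": "word must be alphabetic"}
    return {"status": 200, "hint": service.get_hint(word)}


if __name__ == "__main__":
    # A fake model keeps the sketch runnable without any external API.
    service = HintService(lambda prompt: "  It is a tall wading bird.  ")
    print(handle_hint_request(service, {"word": "Crane"}))
    print(handle_hint_request(service, {"word": "123"}))
```

Because the model call is injected, the service can be tested with a fake, and swapping providers or changing the prompt does not require touching the front-end.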

Things That Went Well

- Speed and acceleration of development — Every student cited speed as a primary benefit. Boilerplate generation, rapid feature implementation, and quick iteration cycles were universally praised.

- Creative freedom and student-driven projects — Students loved building their own original web applications rather than uniform assignments. Multiple students said this was the most motivating aspect of the class.

- Industry perspective and realism — Having instructors from industry brought practical, up-to-date knowledge. Students felt the class prepared them for real workplaces where AI is ubiquitous. Multiple students contrasted this favorably with traditional academic courses.

- Emphasis on testing and verification — While opinions varied on whether testing felt like extra work, students universally acknowledged its importance for constraining AI errors and hallucinations.

- Architecture and design focus — The shift from syntax-focused instruction to architecture, requirements, and design thinking was widely appreciated. Students felt this was more aligned with real engineering work.

- DevOps and deployment practice — CI/CD with Azure and GitHub Actions gave students real deployment experience, despite some friction points.

- Rapid UI prototyping — AI-assisted front-end creation allowed students to quickly test user flows and iterate on design.

- Confidence and career readiness — Students left feeling significantly more prepared for professional software development. The highest-rated question (Q12: 4.875) was about confidence building and deploying with AI at work.

- Portfolio-worthy work — Students produced polished, deployable applications that showcased their engineering abilities.

- Student empowerment — When asked how AI made them feel, words like “empowering,” “productive,” “modern,” and “glass-ceiling-shattering” dominated.

Potential Changes for Next Time

1. Teach Prompt Engineering Early

Multiple students (Nathan, Lily, Emma, Jordan) requested explicit instruction on how to write effective prompts. Nathan’s suggestion is the most actionable: start with prompt-first assignments where students write structured prompts that guide AI on architecture and flow, rather than simple “build me X” commands. This could be introduced as early as Assignment 1.
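As one possible shape for a prompt-first assignment, the sketch below contrasts a bare command with a structured prompt that states structure and flow explicitly. `StructuredPrompt` and its field names are hypothetical, not a course artifact, and the closing instruction simply mirrors the plan-first habit some students described.

```python
from dataclasses import dataclass, field


@dataclass
class StructuredPrompt:
    """Hypothetical template for a prompt that states structure and flow up front."""

    goal: str
    structure: list[str] = field(default_factory=list)    # files, layers, components
    flow: list[str] = field(default_factory=list)         # ordered user or data flow
    constraints: list[str] = field(default_factory=list)  # conventions the AI must follow

    def render(self) -> str:
        sections = [f"Goal: {self.goal}"]
        for title, items in (
            ("Structure", self.structure),
            ("Flow", self.flow),
            ("Constraints", self.constraints),
        ):
            if items:  # omit empty sections rather than printing blank headings
                lines = "\n".join(f"{i}. {item}" for i, item in enumerate(items, 1))
                sections.append(f"{title}:\n{lines}")
        sections.append("Before writing code, restate the plan and ask clarifying questions.")
        return "\n\n".join(sections)


if __name__ == "__main__":
    simple_command = "build me Wordle"
    structured = StructuredPrompt(
        goal="Build a Wordle clone",
        structure=["Nuxt page for the game board", "C# API endpoint that scores guesses"],
        flow=["Player submits a guess", "API returns per-letter results", "Board updates"],
        constraints=["One component per file", "Unit tests trace to written requirements"],
    )
    print(simple_command)
    print("---")
    print(structured.render())
```

Grading the rendered prompt before any code is generated would give instructors a checkpoint on architecture decisions that students otherwise hand to the AI.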

2. Provide More Pre-Planned Structure

Derek and several others suggested more defined assignment structure and less improvisation. Design documents, architectural templates, and scaffolding guidance before coding could help students avoid vibe-coding. Balance structure with the creative freedom that students loved.

3. Teach When AI Helps vs. When It Hurts

Tyler specifically asked for guidance on where AI shines (boilerplate, test scaffolds, UI prototyping) and where to avoid it (architecture decisions, Azure configuration, complex refactors without context). This could be a recurring theme in lectures.

4. Start in Individual Repos

Jordan suggested students should build projects in their own repos from the start, rather than branches of a forked class repo. This would make portfolio presentation much easier.

5. Be Transparent About Tooling Costs

Marcus noted that GitHub Pro significantly improved his experience but not everyone could afford it. Discuss required tooling costs upfront, explore alternatives, and consider providing standardized AI tool access with a short onboarding tutorial.

6. Improve Azure/DevOps Guidance

Multiple students (Tyler, Emma) found AI unreliable for Azure deployment. Provide prebuilt pipeline templates, clarify common pitfalls, and set clear hand-off boundaries for front-end vs. back-end deployment.

7. Emphasize Requirement-Anchored Testing

Marcus’s insight that tests were sometimes written “to pass” rather than to validate requirements is critical. Teach students to write tests that trace back to requirements, not just achieve code coverage.
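The difference can be illustrated with a small, hypothetical Wordle-style scorer. The function `score_guess` and the requirement IDs (REQ-1 to REQ-3) are invented for this sketch. The first test is written to pass; the others each assert a behavior that a stated requirement demands.

```python
from collections import Counter


def score_guess(answer: str, guess: str) -> list[str]:
    """Score a Wordle-style guess as 'green', 'yellow', or 'gray' per letter."""
    result = ["gray"] * len(guess)
    # Answer letters that were not matched exactly remain available for yellows.
    remaining = Counter(a for a, g in zip(answer, guess) if a != g)
    for i, (a, g) in enumerate(zip(answer, guess)):
        if a == g:
            result[i] = "green"
    for i, (a, g) in enumerate(zip(answer, guess)):
        if a != g and remaining[g] > 0:
            result[i] = "yellow"
            remaining[g] -= 1
    return result


def test_written_to_pass():
    # Anti-pattern: only checks that something comes back, so a wrong
    # implementation would pass just as easily.
    assert score_guess("crane", "crane") is not None


def test_req1_correct_position_is_green():
    # REQ-1 (hypothetical): a letter in the correct position is green.
    assert score_guess("crane", "crane") == ["green"] * 5


def test_req2_misplaced_letter_is_yellow():
    # REQ-2 (hypothetical): a letter present elsewhere in the answer is yellow.
    assert score_guess("crane", "nacre") == ["yellow"] * 4 + ["green"]


def test_req3_extra_duplicates_are_gray():
    # REQ-3 (hypothetical): a repeated guess letter is not credited more
    # times than it appears in the answer.
    assert score_guess("crane", "eerie") == ["gray", "gray", "yellow", "gray", "green"]
```

Naming each test after the requirement it checks makes the trace visible in test output, and it makes gaps obvious: a requirement with no test is easy to spot.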

8. Address Hallucination Awareness

Alex discovered AI literally fabricated a fake website inside his login page. Emphasize code review, traceability, and visual verification as standard practice when working with AI.

9. Best-Practice Project Structure Earlier

Jordan noted that without early guidance, AI-dictated project structure led to messy monolithic files. Provide scaffold templates and coding conventions at the start of the course.

10. Consider Longer Timelines for Depth

Tyler and others wanted more time to polish and expand projects. The original final’s longer timeline was exciting — consider preserving or extending that.

One-Word Descriptions

Students were asked for one word to describe their AI experience:

| Student | One Word |
|---|---|
| Alex Reynolds | Parasitic |
| Tyler Brooks | Empowering |
| Jordan Hayes | Horse |
| Marcus Chen | Modern |
| Lily Park | Productive |
| Nathan Cruz | Dominant |
| Derek Stone | Glass-ceiling-shattering! |
| Emma | Very Good |

The range is fascinating — from “Parasitic” and “Dominant” (acknowledging AI’s pervasive influence) to “Empowering” and “Glass-ceiling-shattering” (celebrating its enabling power). These words collectively paint a picture of a tool that is powerful, transformative, and demanding of thoughtful management.

Background Information

| Student | Years of Experience | AI in Other Classes |
|---|---|---|
| Alex Reynolds | ~3 | Yes |
| Tyler Brooks | 4 | Yes |
| Jordan Hayes | 4 | Yes |
| Marcus Chen | 3 | Yes |
| Lily Park | ~3 | Yes |
| Nathan Cruz | 3 | No |
| Derek Stone | 8+ | No |
| Emma | 4 | Yes |

Average prior experience: approximately 4 years. Six of eight students used AI in other classes, suggesting broad AI adoption across the CS curriculum. The two who did not (Nathan and Derek) offered some of the most reflective feedback.

Executive Takeaways

- Career readiness with AI is extremely high (Q9=4.75, Q12=4.875). Students believe they can deliver professional outcomes using AI-assisted workflows.

- The pedagogical shift worked. Students strongly prefer the creative, architecture-first approach grounded in testing and DevOps over traditional syntax-focused instruction.

- The primary risk area is fundamentals and prompting. Students with less intentional AI usage reported lower ownership and understanding. The fix: teach prompt engineering patterns, provide design templates, and anchor tests to requirements.

- The course is highly recommended (Q13=4.75). Students advocate for continuing and expanding the AI-integrated format.
